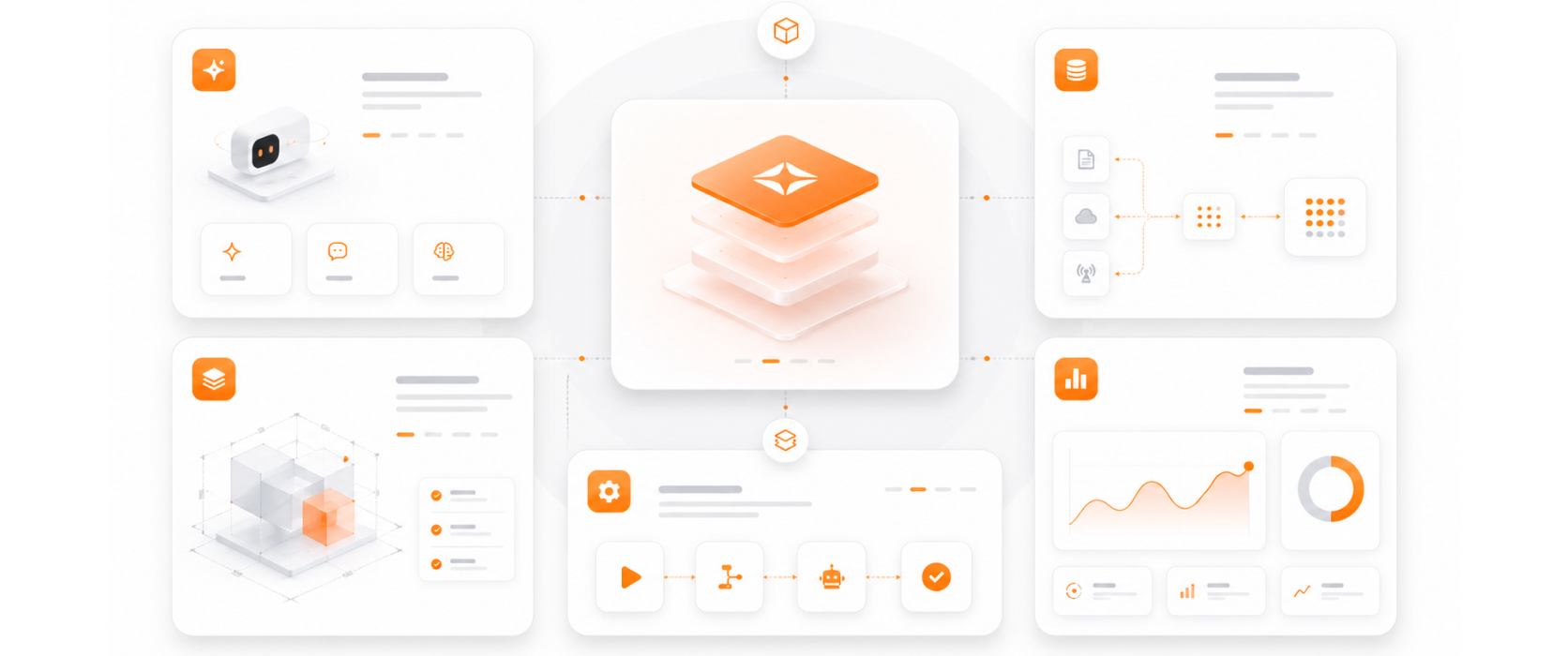

Our Argus QA evaluation platform is deployed in production environments. It is a real product, not a theoretical framework.

We evaluate AI systems across the quality dimensions that enterprise deployment requires: accuracy, hallucination, relevance, toxicity, faithfulness, and bias.

Our evaluation frameworks are designed specifically for RAG systems and AI agents, with precision, recall, and faithfulness metrics calibrated for both retrieval and generation.
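To make the retrieval-side metrics concrete, here is a minimal sketch of how precision@k and recall@k are commonly computed for a single query. It is a generic illustration, not the Argus implementation; the function name and the assumption that relevance is available as a set of labelled document IDs are hypothetical.

```python
from typing import Sequence, Set, Tuple


def retrieval_precision_recall(
    retrieved: Sequence[str], relevant: Set[str], k: int
) -> Tuple[float, float]:
    """Return (precision@k, recall@k) for one query.

    retrieved: document IDs in the order the retriever ranked them.
    relevant:  ground-truth IDs labelled relevant for the query
               (hypothetical labels; how they are produced is an assumption).
    """
    # Drop duplicate IDs while preserving rank order, then keep the top k.
    top_k = list(dict.fromkeys(retrieved))[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    # Precision: share of returned documents that are relevant
    # (divides by the number actually returned if fewer than k came back).
    precision = hits / len(top_k) if top_k else 0.0
    # Recall: share of all relevant documents that made it into the top k.
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Example: two of the top three results are relevant, and two of the
# three relevant documents were retrieved, so p == r == 2/3.
p, r = retrieval_precision_recall(["d1", "d7", "d3", "d9"], {"d1", "d3", "d5"}, k=3)
```

Faithfulness, by contrast, checks generated claims against the retrieved context and is usually scored with a judge model or an entailment classifier, which is outside the scope of this sketch.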

Evaluation runs continuously in production, not just at launch. Quality trends are tracked over time, so teams can intervene before issues become visible to users.

Evaluation and observability are built into our Agent Factory delivery pipeline: every agent that leaves the factory is instrumented for production observability.